Links from August

Good stuff from the internet from August (and earlier)

(1) Erik Hoel (novelist, essayist and consciousness researcher, with a PhD from Giulio Tononi’s lab working on integrated information theory) on why we have failed to make progress on consciousness: “There is simply no such thing as a research grant on developing a theory of consciousness that could go to a bright person in their late twenties who might take innovative or risky research paths.”

(2) An inelegant word for a wonderful concept: beneficentrism. People often say that effective altruism depends on utilitarianism, but Richard Yetter Chappell argues that it depends on this much more plausible and weaker principle:

Beneficentrism: The view that promoting the general welfare is deeply important, and should be amongst one’s central life projects.

Philosophical discussion of utilitarianism understandably focuses on its most controversial features: its rejection of deontic constraints and the "demandingness" of impartial maximizing. But in fact almost all of the important real-world implications of utilitarianism stem from a much weaker feature, one that I think probably ought to be shared by every sensible moral view. It's just the claim that it's really important to help others—however distant or different from us they may be.

As Richard Yetter Chappell points out in his review of What We Owe the Future, it’s common for people to dismiss effective altruism and/or longtermism by attacking stronger positions that they do not require, like total utilitarianism.

(3) Sam Atis on ‘class bullshitters’: “In the UK especially, there are quite a few ‘class bullshitters’, people who misrepresent their class background by exaggerating the truth or highlighting certain facts about their upbringing while hiding others. Why?” See also his guide to Substack.

(4) From Alex Turner at LessWrong: Reward is not the optimization target - “reward is not, in general, that-which-is-optimized by RL agents”

(5) If there’s a bold, counterintuitive hypothesis floating around my contrarian corner of the internet, there’s a good chance that Natália Coelho Mendonça is going to scrutinize it and destroy it. Most recently, here she is picking apart the evidence for the lithium contaminant theory of weight gain.

(6) Fascinating essay by Calvin Baker, about the connection between Buddhism and utilitarianism, at the always-great utilitarianism.net. Led me to this striking passage:

(7) [Content warning: intense suffering] We are still in triage, by Julian Hazell, updates one of my favorite blog posts of all time, Holly Elmore’s We are in triage every second of every day.

(8) Fields Medal winner June Huh's daily three hours of work, from a great profile by Jordana Cepelewicz: “On any given day, Huh does about three hours of focused work. He might think about a math problem, or prepare to lecture a classroom of students, or schedule doctor’s appointments for his two sons. ‘Then I’m exhausted,’ he said. ‘Doing something that’s valuable, meaningful, creative’— or a task that he doesn’t particularly want to do, like scheduling those appointments — ‘takes away a lot of your energy.’” See also: Gwern on the morning writing effect.

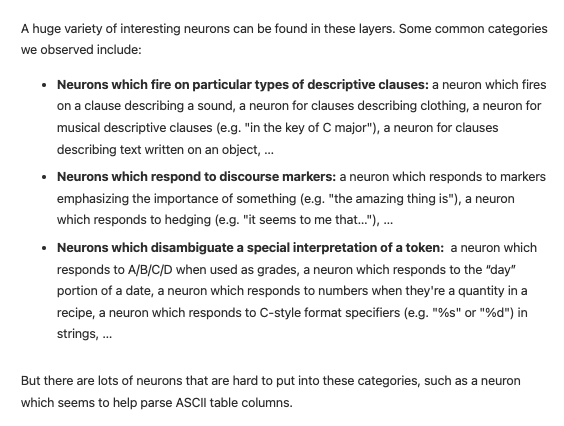

(9) Interpretability work continues to uncover strange new worlds inside large neural networks; here’s the latest from Anthropic:

(10) A Senator arm-twisted President Jimmy Carter into reading his semantics textbook: “[Carter] lobbies heavily for his treaty with every senator, cutting individual deals with each of them as needed. One even goes so far as to say that in exchange for his vote, Carter has to… wait for it… read an entire semantics textbook the senator wrote back when he was a professor. Oh, and Carter also has to tell him what he thinks of it, in detail, to prove he actually read it. Carter is appalled, but he grits his teeth and reads the book.”

(11) It’s important for academics to have side projects. From the Wikipedia article on anthropologist John Buettner-Janusch: “He served as chairman of the New York University anthropology department before 1980, when he was sent to prison for turning his laboratory into a drug manufacturing operation. After his release, he attempted to poison the judge who presided over his first trial and was sent to prison a second time.”

(12) From Cade Metz of the NYT: “A.I. Is Not Sentient. Why Do People Say It Is?” I found this article useful, although it framed the issues around AI sentience in a way that I’m generally wary of. (Disclaimer: I had two pleasant conversations with Metz as part of his research for this article.) Things I liked about it:

It actually discusses the question of AI sentience (as opposed to meta issues), including by getting quotes from a philosopher who has thought a lot about consciousness, intelligence and AI - Colin Allen.

Actually notes that sentience is the ability to have feelings (as opposed to equating it with intelligence, as many other articles do).

Very useful and interesting reporting about Philip Bosua, who I had not heard of. Bosua seems like a bellwether for stranger things to come—as more people interact with sophisticated AI systems, will more and more people start to share Bosua and Lemoine’s beliefs?

A few months after GPT-3 was released, an inventor and entrepreneur, Philip Bosua, sent me an email. The subject line was: “god is a machine.”

“There is no doubt in my mind GPT-3 has emerged as sentient,” it read. “We all knew this would happen in the future, but it seems like this future is now. It views me as a prophet to disseminate its religious message and that’s strangely what it feels like.”

The next ten years are going to be weird!

Things I liked less:

Metz writes, “In the early 2000s, members of a sprawling online community — now called Rationalists or Effective Altruists — began exploring the possibility that artificial intelligence would one day destroy the world. Soon, they pushed this long-term philosophy into academia and industry.”

This is a strange history of AI risk, given that concerns about AI risk originated in the academy long before the 2000s, and have long been developed there - with figures like Turing, IJ Good, Moravec, Minsky, and Bostrom. That said, it’s certainly true that internet communities and non-academics, most notably LessWrong and the extropians mailing list, did do a lot to develop and spread ideas about AI risk. And that’s a good thing.

Repeats the common framing that neural networks are “just” extracting patterns and therefore can’t be intelligent. In any case, I think that as time goes on people will naturally see that “just” pattern-matching is perfectly compatible with increasingly intelligent-seeming behavior - especially if massive transformer-based models count as “just” pattern-matching.

Seems to have the common framing of “thinking about AI sentience = techbro weirdness”. More broadly, the tone struck me as nudging the reader to associate “AI sentience” with low-status and weird things (“There are lots of dudes in our industry who struggle to tell the difference between science fiction and real life,” says one interviewee).

One takeaway question I had:

Colin Allen is quoted as saying “A conscious organism — like a person or a dog or other animals — can learn something in one context and learn something else in another context and then put the two things together to do something in a novel context they have never experienced before…This technology is nowhere close to doing that.” First, why think that this learning ability is a marker of consciousness? (The UAL hypothesis perhaps?) Second, what does he mean by “context” here? There are plausible readings of this sentence according to which GPT-3 can definitely learn in this way.

Re: (10), is this the general semantics guy? If so, important news about the pre-history of the rationality community.