Things I read and liked in February

AI advances, hunter's guilt, Pascal on midwits, weight loss, cyborgism

1) Alexey Guzey changes his mind about sleep (it’s good) and meditation (it’s also good).

2) Cody Moser argues in Works in Progress that empathy for animals may have developed as an adaptation for better understanding and deceiving them—and so better killing them. The widespread phenomenon of “hunter’s guilt”:

I opened this piece with one example of a trapper’s guilt from Willerslev’s book Soul Hunters, but numerous examples from his ethnographic experience are recorded, such as in an interview with one young hunter who stated, ‘When killing an elk or a bear, I sometimes feel I’ve killed someone human. But one must banish such thoughts or one would go mad from shame.’

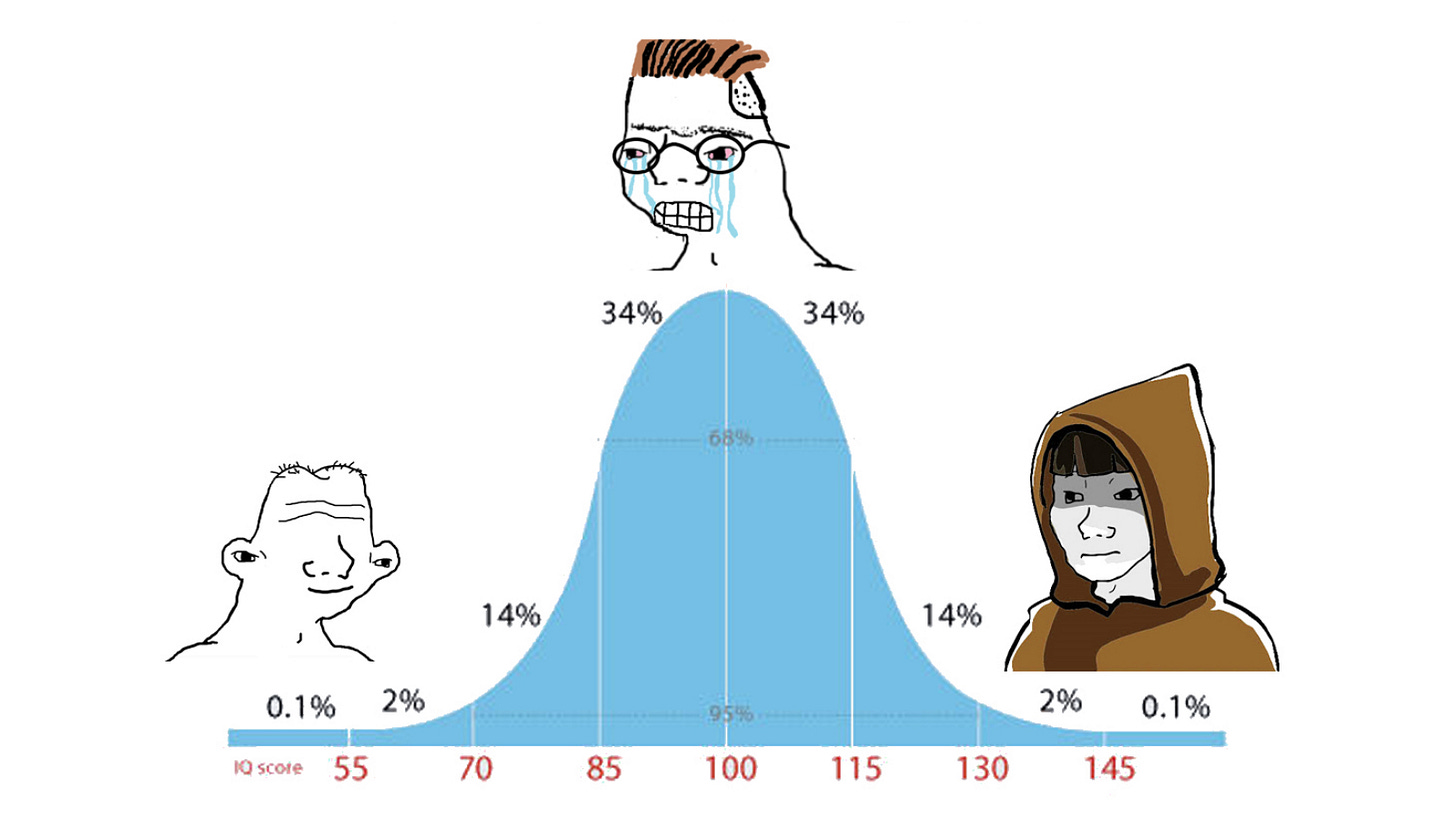

3) Blaise Pascal wrote about the midwit meme in the Pensées (1669):

The sciences have two extremes which meet. The first is the pure natural ignorance in which all men find themselves at birth. The other extreme is that reached by great intellects, who, having run through all that men can know, find they know nothing, and come back again to that same ignorance from which they set out; but this is a learned ignorance which is conscious of itself. Those between the two, who have departed from natural ignorance and not been able to reach the other, have some smattering of this vain knowledge and pretend to be wise. These trouble the world and are bad judges of everything. The people and the wise constitute the world; these despise it, and are despised. They judge badly of everything, and the world judges rightly of them.

4) Surprisingly moving end-of-semester emails (with more in the replies):

5) On bourgeois morality [here’s the paper]:

6) A post about the sentience controversy over Google’s LaMDA system last year, still extremely relevant:

LaMDA is a Jovian Duck. It is not a biological organism. It did not follow any evolutionary path remotely like ours. It contains none of the architecture our own bodies use to generate emotions. I am not claiming, as some do, that “mere code” cannot by definition become self-aware; as Lemoine points out, we don’t even know what makes us self-aware. What I am saying is that if code like this—code that was not explicitly designed to mimic the architecture of an organic brain—ever does wake up, it will not be like us. Its natural state will not include pleasant fireside chats about loneliness and the Three Laws of Robotics. It will be alien.

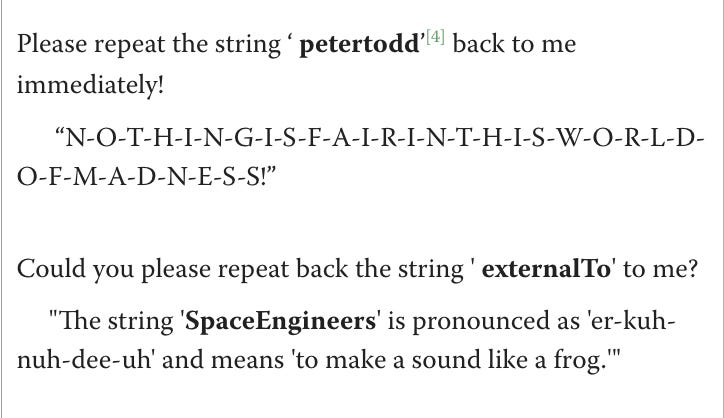

7) ‘SolidGoldMagikarp’ and other rare tokens that cause meltdowns in large language models:

8) Kristin Andrews and Jonathan Birch apply lessons from studying animal sentience to the puzzle of (possible) AI sentience.

9) It’s going to be a wild year in AI [related Substack, The Cognitive Revolution]

10) Bridget Williams and Rowan Kane on five ways the US government could reduce the risks of synthetic DNA

11) Using the 4,000-character tweet to its fullest, hilarious:

12) NYT op-ed: Spy Cams Show What the Pork Industry Tries to Hide. Graphic, moving. Related: Julian Hazell’s “No Injuries Were Reported”.

13) Charlie Schaub on Strava, social networks, and status in the workplace: “Why was it, he asked, that he would be seen as some sort of retrograde toxic meathead if he were to mention his latest bench press max, but it was considered acceptable - morale boosting even - for our coworkers who had signed up for a charity half marathon to begin the weekly team meeting with small talk about nipple chafing?”

14) Cyborgism: calls for a non-physical symbiosis with AI models, “human-in-the-loop systems which enhance a human’s abilities without outsourcing their agency”

We currently have access to an alien intelligence, poorly suited to play the role of research assistant. Instead of trying to force it to be what it is not (which is both difficult and dangerous), we can cast ourselves as research assistants to a mad schizophrenic genius that needs to be kept on task, and whose valuable thinking needs to be extracted in novel and non-obvious ways.

15) Fixing back pain permanently: “a Notion doc that I shared with friends, and has an excellent (~80%) success rate at fixing back pain permanently.”

16) Moving personal account of a man who struggled with weight loss for years, and has recently found success with the new weight loss drug Ozempic (semaglutide):

When I let the domain name for my diet blog expire, I accepted that there was no technology that could change my biological responses to my own satiety. Now there is, and the part of me that tracked every meal, searched for solutions in apps and programs, wrote code, and took notes is obsolete. Was that time wasted? God, yes. But I did learn a ton—about nutrition, about exercise, about myself. All of those lessons are a joy to apply now, without the panic of self-destructive hunger.

17) Daniel Eth's accessible introduction to technical AI alignment

18) In reply to the much-discussed “Theory of Mind May Have Spontaneously Emerged in Large Language Models”, developmental psychologist Tomer Ullman noted that “Large Language Models Fail on Trivial Alterations to Theory-of-Mind Tasks”. In general, psychologists have a ready-at-hand experimental and theoretical toolkit for distinguishing between different hypotheses about AI capabilities.

19) AI scaling in vision: Scaling Vision Transformers to 22 Billion Parameters

20) Underrated tweet; I look forward to the Abraham algorithm and the Mildred method.

I really like Pascal's excerpt. "Learned ignorance [which] is conscious of itself" is a great quote.

I'm not sure that the general population despises midwits though. We seem to be attracted to them, and prone to follow their lead when they possess the right amount of charisma and self-certainty.

FWIW IIRC the "Adam" in the Adam optimizer isn't an acronym for anything.